1. Bad data hurts businesses by leading to flawed decisions, wasted time, and significant financial loss.

2. Much of the cost of bad data comes from hidden effort spent cleaning, correcting, and validating datasets.

3. Location-based data is especially vulnerable to inaccuracies due to lack of standardization.

4. Even small errors in POI or geometry data can have outsized business consequences.

5. Investing in clean, accurate data upfront reduces risk and improves long-term decision-making.

We know, everybody loves data. There’s definitely no shortage of it these days. Unfortunately, as we’ve said before, not all data is created equal. That’s why we talk about the importance of data standards so often and even created a handy data evaluation checklist to help you make smarter and more informed data choices.

As with many things in this world, there is a form and function to data, too. We talk about its form through the lens of how to make a dataset usable—including the flurry of technical problems that can result from attempting to use dysfunctional datasets.

Data’s function, however, is a bit more of a moving target. With the right data in hand, you can do amazing things. With bad or inaccurate data, you can quickly go down the wrong “rabbit hole” and make ill-informed decisions that do more harm than good.

Sadly, bad data gets used all the time, often without organizations even realizing it. And it can lead businesses to draw inaccurate conclusions and make costly long-term errors. This is why, especially at a time when data is basically everywhere, it’s so important to source accurate data at all times. Not doing so simply isn’t worth the consequences.

To understand how much bad data really costs, we first need to take a step back and look at the industry’s big picture.

In 2016, the big data market was estimated to be worth $136 billion per year. IBM also found that, in the same year, using bad data could cost the U.S. economy around $3.1 trillion per year, if not more. Just imagine what this number is globally today.

While this clearly paints a horrible picture of negative ROI, that’s not what should concern you. There are two important things at play here: 1) a lot of bad data is in circulation, and 2) too much of it is being used, consuming valuable resources and leading to poor decisions.

Most bad data is a byproduct of either human error or a lack of data expertise among the people using data to draw insights. As Thomas Redman puts it in the Harvard Business Review:

“The reason bad data costs so much is that decision-makers, managers, knowledge workers, data scientists, and others must accommodate it in their everyday work. And doing so is both time-consuming and expensive. The data they need has plenty of errors, and in the face of a critical deadline, many individuals simply make corrections themselves to complete the task at hand. They don’t think to reach out to the data creator, explain their requirements, and help eliminate root causes.”

Think about how many people in one organization alone are doing things like this. Then multiply that by every organization in the world. The reality of that is truly bleak. But it should come as no surprise that, with so many cooks in the data kitchen, errors slip through the cracks often.

This is what Redman refers to as “hidden data factories,” which illustrates an important point about the economic impact of poor quality data. It’s not merely about the decisions that are informed by bad data. Rather, a lot of the waste, financially speaking, stems from the enormous amount of time data professionals spend cleaning and organizing data, spotting and fixing errors, and confirming sources. If the data were clean, this would be unnecessary.

For knowledge workers, this kind of ‘quality control’ work can consume up to 50% of their time. For data scientists, that number easily climbs to 60%. In both cases, this kind of manual labor is simply not a good use of their time and is an incredible waste of highly valuable resources.

Location data is no exception. One study found that 59% of location data is inaccurate, while in another, 25% of respondents said there wasn’t enough clarity around the sources of the location data collected.

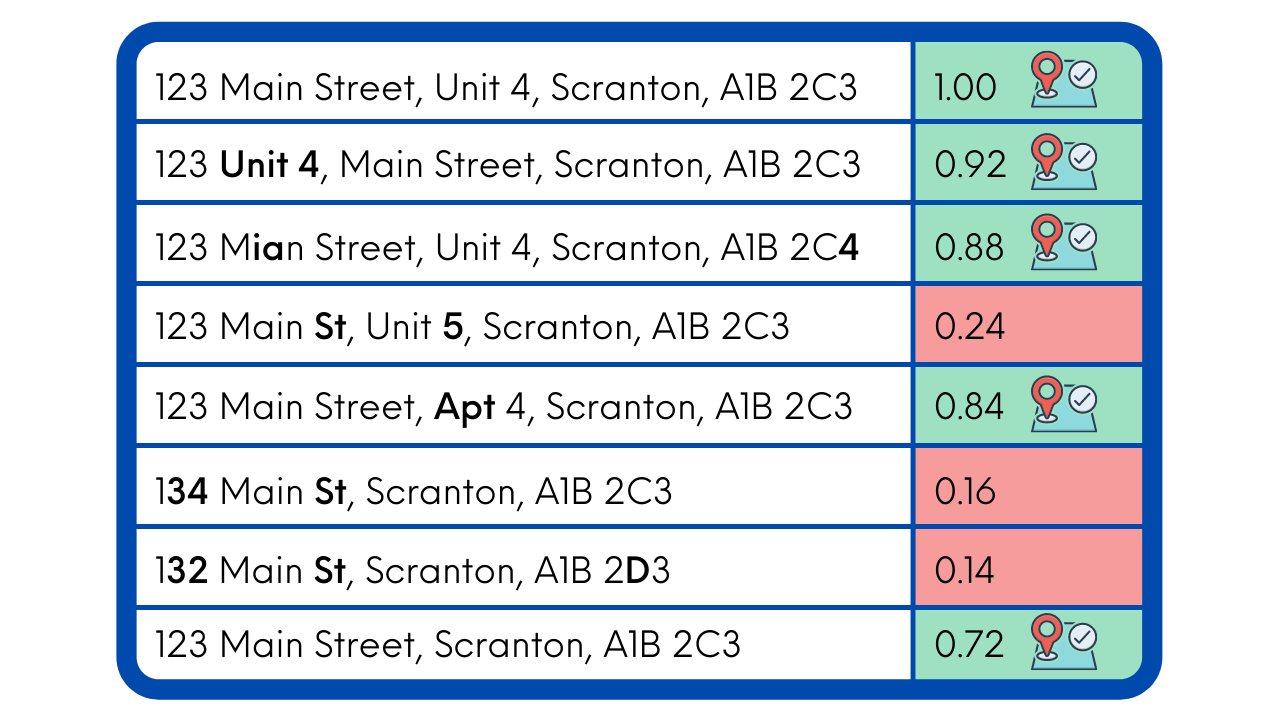

Truth be told, location-based data, whether around points of interest (POI) or building footprints (Geometry), is quite complicated and not always easy to work with. One of the biggest problems with these datasets is that, until recently (thanks to Placekey), building addresses and specific geographic locations were not standardized. This makes it easier to miss important and timely details, such as whether a business is open or permanently closed. This has been a major sticking point for anyone working with location data during the pandemic.

Taking it a step further, consider building geometry. If the polygons aren’t built to accurate dimensions, the result is a domino effect of inaccuracies and inconsistencies that, when coupled with flawed POI data, can make it impossible to measure foot traffic accurately.

Different businesses use location-based data in different ways. Retailers use it in trade area analysis to make revenue-impacting decisions around site selection and de-selection. Financial analysts use it in investment research or company valuations. Marketers across all sectors rely on it heavily to inform how, when, and where they place digital ads, OOH ads, and mobile ads.

Unfortunately, one kink in the chain can spell disaster and flush a lot of money down the drain. Let’s take retailers as an example. Suppose the data used to inform site selection tells you that a brick-and-mortar location you’re considering is near a complementary business, one that could create a beneficial organic foot traffic “halo effect” for your business. But the data is not up to date. It fails to reveal that this business, potentially the factor that tipped your decision towards this location, has actually been closed for three months, and you don’t realize it until you start setting up shop. The revenue impact from the loss of shared foot traffic alone could turn a once smart investment into a total “lemon.”
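To make the site selection scenario concrete, here is a minimal Python sketch of a freshness check you might run on a neighboring POI before counting on its foot traffic. The `Poi` record, its field names (`closed_on`, `last_verified`), and the 30-day threshold are all hypothetical and chosen for illustration only; they are not taken from any particular dataset's schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Poi:
    """Hypothetical POI record; field names are illustrative assumptions."""
    name: str
    closed_on: Optional[date]  # None means no closure is on record
    last_verified: date        # when the record was last confirmed


def is_reliable_neighbor(poi: Poi, today: date, max_age_days: int = 30) -> bool:
    """Return True only if the POI is open and its record was verified recently."""
    if poi.closed_on is not None and poi.closed_on <= today:
        return False  # the business has already closed
    # A record nobody has verified recently cannot confirm the business is open.
    return (today - poi.last_verified) <= timedelta(days=max_age_days)


today = date(2021, 6, 1)
cafe = Poi("Cafe", closed_on=None, last_verified=date(2021, 5, 20))
gym = Poi("Gym", closed_on=date(2021, 3, 1), last_verified=date(2021, 5, 20))
shop = Poi("Shop", closed_on=None, last_verified=date(2021, 1, 1))

print(is_reliable_neighbor(cafe, today))  # True: open and recently verified
print(is_reliable_neighbor(gym, today))   # False: closed three months ago
print(is_reliable_neighbor(shop, today))  # False: record is stale
```

The stale record is treated the same as a closed business on purpose: if you can't confirm a neighbor is still open, it is safer not to build a halo effect into your revenue estimate.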

As another example, let’s look at how this affects marketing. You might decide to launch a mobile ad campaign that triggers promotional messaging to consumers when they’re within a pre-determined geofenced area. But what if the geofence was created using bad data? Well, for starters, it could trigger notifications prematurely, when consumers are not within ideal proximity to your business. This creates an incongruent and confusing customer experience.
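As a rough illustration of how a bad coordinate can misfire a geofence, the Python sketch below models the fence as a simple circle and measures distance with the haversine formula. The coordinates, the 100-meter radius, and the function names are assumptions made up for this example; real campaigns often use polygon geofences and a vendor's own tooling.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def inside_geofence(user, center, radius_m):
    """Return True if the user's (lat, lon) is within radius_m of the center."""
    return haversine_m(*user, *center) <= radius_m


# Hypothetical coordinates: the store's true location, and a bad record
# that places it 0.002 degrees of latitude (roughly 220 m) to the north.
true_center = (40.7580, -73.9855)
bad_center = (40.7600, -73.9855)
user = (40.7600, -73.9855)  # a consumer standing where the bad record points

print(inside_geofence(user, true_center, 100))  # False: too far from the real store
print(inside_geofence(user, bad_center, 100))   # True: the bad fence fires anyway
```

An error of two thousandths of a degree is enough to send the promotion to someone a couple of blocks away from the storefront, which is exactly the premature trigger described above.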

This list of examples is endless, but the key takeaway here is simple. Even the slightest inaccuracies in location-based data can cause costly errors that can’t be recouped.

Continuing with the marketing example, it was found that 62% of organizations use marketing data that is up to 40% inaccurate to plan their advertising and communications campaigns. They do this even though 94% of businesses have said that they suspect their customer data is inaccurate. It starts to make you wonder: if so many people know they’re using bad or suspicious data, why do they keep using it?

Not that this is a good excuse for doing so, but letting bad data dictate business decisions is simply the path of least resistance. Sometimes businesses just need data and insights in a pinch and don’t have the time or resources to ensure that it’s 100% clean and accurate.

Of course, that’s bound to happen from time to time. But this kind of oversight should be the exception and never the rule. Using bad data like this creates a slippery slope for everything you do, from marketing campaigns, customer acquisition efforts, and resource management to business expansion, investment choices, and pretty much anything else that data can inform. And the errors attributed to bad data can waste valuable marketing dollars, reduce conversions, increase customer acquisition costs, tank profits, and more.

Simply put, taking action on poor quality data is akin to expecting someone to make good on empty promises. If the data isn’t clean and accurate from the start, you can’t go into a business decision or expect a specific outcome with a high degree of confidence. If anything, just anticipate the worst and, if all goes well in the end, be pleasantly surprised by a positive end result. But why leave it up to chance when you could just get it right the first time?

At SafeGraph, our mission is to make it easier than ever to access clean and accurate data. Our team never compromises on quality to ensure that your business or organization can glean meaningful, relevant, and actionable insights—based on quantitative truth—to help make more informed decisions, allocate budgets and resources wisely, and even spark new innovations.

Our entire SafeGraph Places dataset is expertly curated by our team every month so that the data is always up to date and immediately usable on your end. That means your internal teams waste less time (and money) cleaning the data, which, in turn, gives you more opportunities to improve your marketing, sales, and business analytics over time.

To see what it’s like to work with truly clean and accurate data—and why it makes all the difference—schedule a demo with a SafeGraph expert.

1. What is considered bad data?

Bad data includes inaccurate, outdated, incomplete, duplicated, or poorly structured information that leads to unreliable insights.

2. How does bad data affect business decisions?

It can result in poor site selection, ineffective marketing campaigns, misallocated budgets, and incorrect investment decisions.

3. Why is location-based data especially prone to errors?

Location data lacks universal standards, making address matching, POI status, and geometry accuracy difficult to maintain without rigorous validation.

4. Why do companies continue using bad data despite knowing the risks?

Time pressure, limited resources, and short-term needs often push teams to rely on imperfect data rather than validate it thoroughly.

5. How can businesses reduce the impact of bad data?

By sourcing data from reliable providers, prioritizing accuracy and freshness, and minimizing internal data-cleaning overhead.